Agentic Diagnostic Tools

Engineering Trustworthy AI Advisory Systems for Complex Operations

The most valuable enterprise AI systems are not the ones that produce the most fluent answers. They are the ones that create reliable, reviewable, and decision-grade outputs from imperfect real-world data.

That distinction matters in supply chain and manufacturing environments. The raw material for analysis is rarely clean. Operational data arrives in heterogeneous spreadsheets, documents, reports, and plant-specific formats. Business meaning is often implicit. Definitions vary by site. Exceptions matter.

A generic AI assistant can help reason about this information. An advisory-grade system must do more: structure it, validate it, preserve lineage, support expert review, and convert ambiguity into defensible decisions.

That is the engineering challenge this platform addresses.

The Core Engineering Philosophy

The platform is built around a simple architectural principle: AI should reason over ambiguity, while the surrounding system preserves certainty wherever possible.

In practical terms, the model response is not treated as the finished product. The AI operates inside a governed workflow that produces durable, typed artifacts: assessments, classified operational tables, benchmark ranges, diagnostic outputs, and action-oriented recommendations.

The result is a system that combines model flexibility with software engineering discipline.

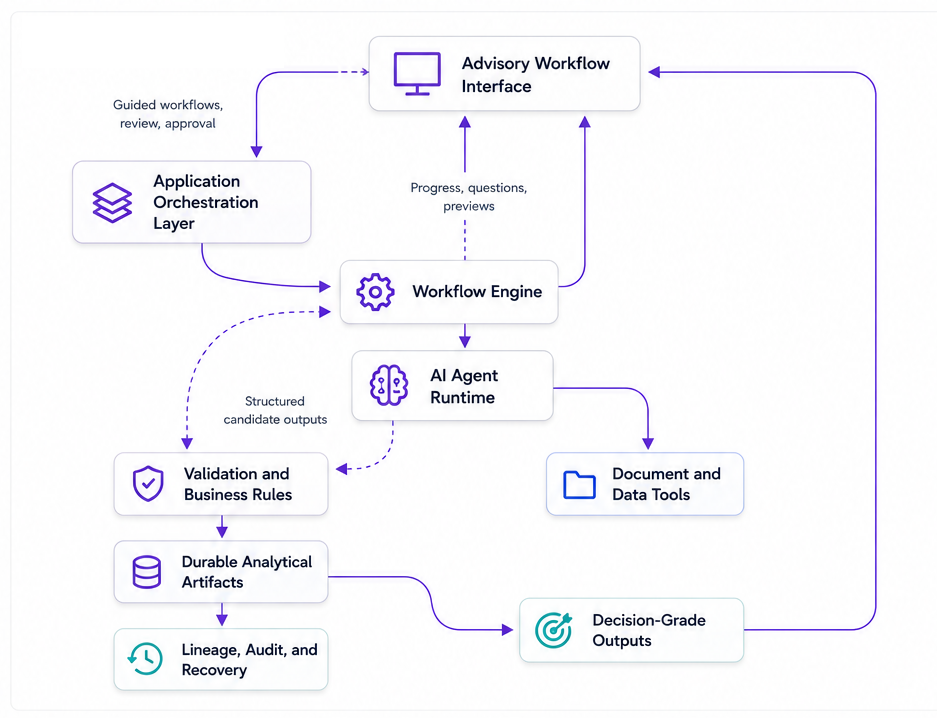

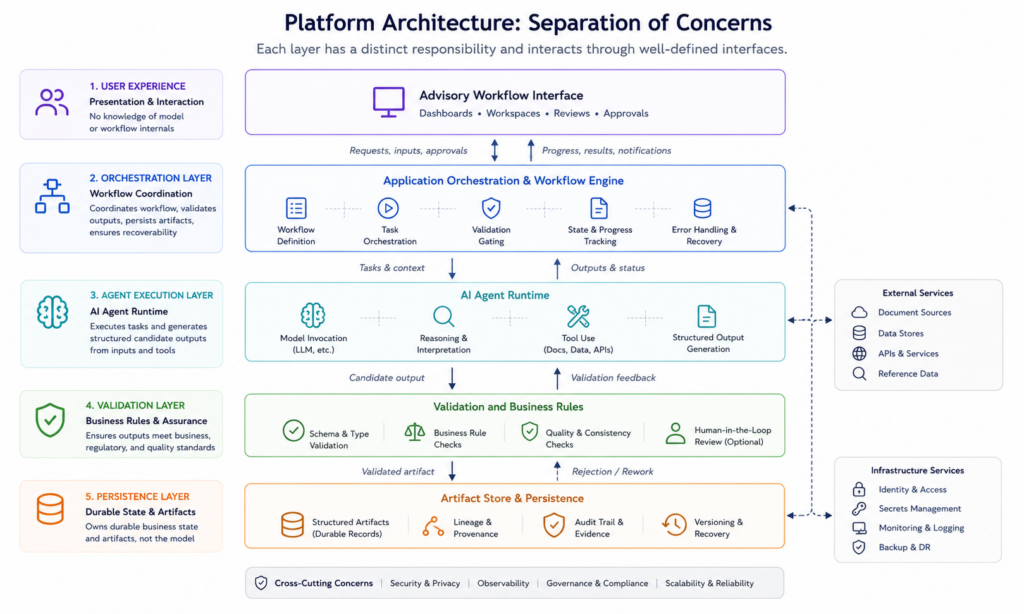

Reference System Design

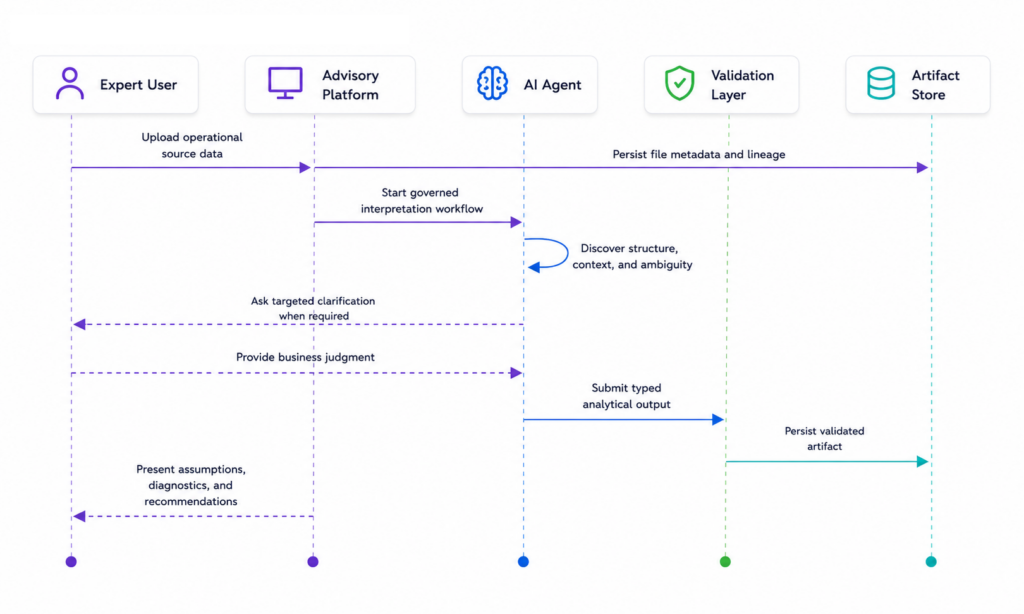

At a conceptual level, the platform separates user experience, orchestration, agent execution, validation, and persistence.

This separation is intentional. The user interface should not need to understand model mechanics. The agent runtime should not own durable business state. The orchestration layer coordinates the workflow, validates outputs, persists artifacts, and ensures the process remains recoverable.

That architecture gives the system room to use advanced AI capabilities without making the model the only control point. The model can interpret messy inputs, but structured validation and artifact persistence determine what becomes part of the business record.

Use AI Where It Adds Judgment, Use Code Where It Adds Certainty

One of the most important engineering disciplines in an AI advisory platform is deciding where not to use AI.

Models are strongest when the task involves ambiguity: interpreting messy source material, extracting meaning from unstructured evidence, identifying contradictions, synthesizing findings, or helping an expert reason across incomplete information. Deterministic software remains the better tool for calculations, schema validation, authorization, routing, versioning, accounting logic, status transitions, and persistence.

The practical pattern is hybrid: deterministic code prepares bounded context, the AI performs the semantic step, and deterministic validation checks the output before anything durable is written.

| Responsibility | Preferred Mechanism | Reason |
|---|---|---|
| Ambiguous interpretation | AI-assisted workflow stage | Requires language understanding, judgment, or synthesis |
| Calculation and normalization | Deterministic code | Requires repeatability and auditability |
| Business approval | Human validation | Requires accountable expert judgment |
| Persistence and lineage | Application and data layer | Requires durable, explainable state |
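
The hybrid pattern described in this section can be sketched in a few lines. This is a minimal illustration, not the platform's implementation: the category labels, the `ClassifiedTable` shape, and the `fake_model` stand-in are all assumptions made so the example runs offline. In a real system the typed contract would more likely be a Pydantic model and `call_model` a provider client.

```python
import json
from dataclasses import dataclass
from typing import Callable

# Hypothetical category labels, used only for this illustration.
ALLOWED_CATEGORIES = {"inventory", "production", "quality", "logistics"}


@dataclass(frozen=True)
class ClassifiedTable:
    table_name: str
    category: str
    confidence: float


def prepare_context(table_name: str, header: list[str], max_columns: int = 20) -> str:
    """Deterministic step: build a bounded prompt from the table header only."""
    columns = ", ".join(header[:max_columns])
    return f"Classify table '{table_name}' with columns: {columns}"


def validate_output(table_name: str, raw: str) -> ClassifiedTable:
    """Deterministic step: reject anything that does not match the contract."""
    data = json.loads(raw)
    category = data["category"]
    confidence = float(data["confidence"])
    if category not in ALLOWED_CATEGORIES:
        raise ValueError(f"unknown category: {category!r}")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError(f"confidence out of range: {confidence}")
    return ClassifiedTable(table_name, category, confidence)


def classify_table(
    table_name: str, header: list[str], call_model: Callable[[str], str]
) -> ClassifiedTable:
    prompt = prepare_context(table_name, header)
    raw = call_model(prompt)  # the semantic step: the only AI call
    return validate_output(table_name, raw)  # nothing durable is written before this passes


def fake_model(prompt: str) -> str:
    """Stand-in for a real provider call so the sketch runs offline."""
    return json.dumps({"category": "inventory", "confidence": 0.82})


result = classify_table("stock_levels", ["sku", "on_hand", "plant"], fake_model)
```

The point of the shape is that the model never writes to application state directly. Only a value that survives `validate_output` is eligible for persistence.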

Data Flow: From Raw Input To Decision Artifact

The data flow follows a staged pattern designed for traceability. Source files are first captured with metadata and lineage. The AI agent then performs discovery, extraction, classification, or synthesis depending on the workflow. When confidence is insufficient, the system brings an expert into the loop through targeted clarification rather than hiding uncertainty behind a confident answer.

This is not a one-shot prompt pattern. It is an iterative, interruptible, and reviewable workflow. That distinction is critical for enterprise use cases where the output may influence operational priorities, financial estimates, or executive recommendations.
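
One way to picture the "bring an expert into the loop" step is a simple confidence gate. The sketch below is illustrative only: the `0.75` threshold, the field names, and the `ClarificationRequest` shape are assumptions, and a real threshold would be tuned per workflow.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.75  # assumed value for illustration


@dataclass(frozen=True)
class Extraction:
    field: str
    value: str
    confidence: float
    source_file: str  # lineage back to the captured input


@dataclass(frozen=True)
class ClarificationRequest:
    field: str
    question: str
    source_file: str


def route(extractions, threshold=CONFIDENCE_THRESHOLD):
    """Split AI extractions into accepted items and targeted expert questions."""
    accepted, clarifications = [], []
    for item in extractions:
        if item.confidence >= threshold:
            accepted.append(item)
        else:
            # Low confidence becomes an explicit question, not a silent guess.
            clarifications.append(
                ClarificationRequest(
                    field=item.field,
                    question=f"Is '{item.value}' the correct value for {item.field}?",
                    source_file=item.source_file,
                )
            )
    return accepted, clarifications


items = [
    Extraction("plant_code", "DE-04", 0.93, "sites.xlsx"),
    Extraction("unit_of_measure", "cases", 0.41, "sites.xlsx"),
]
accepted, questions = route(items)
```

Here `accepted` carries the high-confidence value forward, while `questions` holds one targeted clarification that pauses the workflow for the uncertain field.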

Human Validation Is A Product Boundary

In advisory workflows, human review is not a late-stage quality check. It is a first-class product boundary.

The system should make clear what the AI inferred, what the expert accepted, what the expert changed, and which downstream outputs are now safe to trust. This requires explicit validation states, not informal comments or hidden edits. A durable decision record is what turns AI assistance into an accountable workflow.

The most useful validation moments are usually source relevance, entity mapping, preview review, assumption approval, and final sign-off. Each one narrows uncertainty before the system moves into a more expensive or more consequential stage.
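
Explicit validation states could look something like the following. This is a sketch under assumptions: the state names, the `DecisionRecord` fields, and the rule that only accepted or changed values are safe downstream are illustrative choices, not a description of the platform's schema.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum
from typing import Optional


class ValidationState(Enum):
    INFERRED = "inferred"  # proposed by the AI, not yet reviewed
    ACCEPTED = "accepted"  # expert confirmed the AI value
    CHANGED = "changed"    # expert replaced the AI value


@dataclass(frozen=True)
class DecisionRecord:
    field: str
    ai_value: str
    final_value: Optional[str]
    state: ValidationState
    reviewer: Optional[str]
    decided_at: Optional[datetime]

    @property
    def safe_for_downstream(self) -> bool:
        return self.state in (ValidationState.ACCEPTED, ValidationState.CHANGED)


def infer(field: str, ai_value: str) -> DecisionRecord:
    return DecisionRecord(field, ai_value, None, ValidationState.INFERRED, None, None)


def review(record: DecisionRecord, reviewer: str, final_value: Optional[str] = None) -> DecisionRecord:
    """Return a new record; the AI's original value is preserved, never overwritten."""
    value = record.ai_value if final_value is None else final_value
    state = ValidationState.ACCEPTED if value == record.ai_value else ValidationState.CHANGED
    return DecisionRecord(
        record.field, record.ai_value, value, state, reviewer, datetime.now(timezone.utc)
    )


draft = infer("entity_mapping", "Plant A -> SITE_001")
changed = review(draft, "expert@example.com", "Plant A -> SITE_010")
```

Because `review` returns a new record and keeps `ai_value`, the record shows both what the AI inferred and what the expert decided.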

Why Durable Artifacts Matter

Long-running AI workflows are inherently fragile. Conversations can become large. Tool state can expire. Intermediate reasoning can become difficult to replay. Users may disconnect, return days later, or revise assumptions after expert review.

The platform therefore treats durable artifacts as checkpoints. The conversation is useful context, but the structured artifact is the source of truth.

This approach supports a stronger recovery model. If a session is interrupted, the system can resume from the latest validated artifact. If an output needs revision, the workflow can update the artifact rather than replaying the entire conversation. If an executive challenges a recommendation, the team can trace it back to the source data, assumptions, and validation path.
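
The recovery model can be illustrated with an append-only store of artifact versions. Everything here is a simplification: `ArtifactStore` is an in-memory stand-in for a database table, and treating discovery, extraction, classification, and synthesis as a fixed linear sequence is an assumption made for the example.

```python
from dataclasses import dataclass, field
from typing import Optional

# Assumed linear ordering of stages, for illustration only.
STAGES = ["discovery", "extraction", "classification", "synthesis"]


@dataclass(frozen=True)
class ArtifactVersion:
    stage: str
    version: int
    payload: dict
    validated: bool
    source_files: tuple


@dataclass
class ArtifactStore:
    """In-memory stand-in for a persisted table of artifact versions."""
    _versions: list = field(default_factory=list)

    def save(self, stage, payload, source_files, validated=False) -> ArtifactVersion:
        version = 1 + sum(1 for v in self._versions if v.stage == stage)
        artifact = ArtifactVersion(stage, version, dict(payload), validated, tuple(source_files))
        self._versions.append(artifact)  # append-only: earlier versions stay for lineage
        return artifact

    def latest_validated(self) -> Optional[ArtifactVersion]:
        for artifact in reversed(self._versions):
            if artifact.validated:
                return artifact
        return None


def next_stage(store: ArtifactStore) -> Optional[str]:
    """Resume from the latest validated artifact instead of replaying the conversation."""
    checkpoint = store.latest_validated()
    if checkpoint is None:
        return STAGES[0]
    index = STAGES.index(checkpoint.stage) + 1
    return STAGES[index] if index < len(STAGES) else None


store = ArtifactStore()
store.save("discovery", {"files": 3}, ["a.xlsx"], validated=True)
store.save("extraction", {"rows": 120}, ["a.xlsx"], validated=False)
resume_at = next_stage(store)  # the unvalidated extraction is not trusted as a checkpoint
```

A revision is just another `save` call for the same stage, which yields a new version while the earlier one remains available for tracing.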

The Operating Model For Model Calls

Production AI systems need model operations discipline. The question is not only whether the model can produce a good answer; it is whether the platform can control cost, latency, reliability, provenance, and failure behavior across many real workflows.

Every material model call should have an explicit timeout, bounded retry policy, structured output contract, token budget, and provenance trail. For durable outputs, the system should be able to explain which model family, prompt family, source files, rubric or benchmark version, and user overrides contributed to the result.

This turns model usage from an implementation detail into an operationally managed capability.
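
As a rough sketch of what such a governed call might look like, the wrapper below enforces a token budget, passes an explicit timeout to the provider, retries a bounded number of times, checks a minimal output contract, and returns a provenance record. The names (`CallPolicy`, `Provenance`, `governed_call`), the default limits, and the four-characters-per-token heuristic are all assumptions. The `call_model` callable is a hypothetical adapter around whichever provider client is in use.

```python
import hashlib
import json
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class CallPolicy:
    timeout_s: float = 30.0
    max_attempts: int = 3
    max_prompt_tokens: int = 4000
    backoff_s: float = 0.0  # zero so the sketch runs instantly; real systems would back off


@dataclass(frozen=True)
class Provenance:
    model_family: str
    prompt_family: str
    prompt_sha256: str  # a hash, so the raw prompt is not logged by default
    source_files: tuple
    attempts: int


def estimate_tokens(text: str) -> int:
    """Rough heuristic (about 4 characters per token); not a real tokenizer."""
    return max(1, len(text) // 4)


def governed_call(call_model, prompt, *, model_family, prompt_family,
                  source_files, required_keys, policy=CallPolicy()):
    if estimate_tokens(prompt) > policy.max_prompt_tokens:
        raise ValueError("prompt exceeds token budget")
    last_error = None
    for attempt in range(1, policy.max_attempts + 1):
        try:
            raw = call_model(prompt, timeout_s=policy.timeout_s)
            data = json.loads(raw)  # JSONDecodeError is a ValueError
            if not isinstance(data, dict):
                raise ValueError("output is not a JSON object")
            missing = set(required_keys) - set(data)
            if missing:
                raise ValueError(f"missing keys: {sorted(missing)}")
            provenance = Provenance(
                model_family=model_family,
                prompt_family=prompt_family,
                prompt_sha256=hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
                source_files=tuple(source_files),
                attempts=attempt,
            )
            return data, provenance
        except (TimeoutError, ValueError) as exc:
            last_error = exc
            time.sleep(policy.backoff_s)
    raise RuntimeError(f"model call failed after {policy.max_attempts} attempts") from last_error
```

A fuller provenance record would also carry the rubric or benchmark version and any user overrides. They are omitted here to keep the example short.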

Production Readiness Means More Than A Successful Demo

A successful demo proves that the model can complete the happy path. A production-grade system proves that the product can recover when the happy path breaks.

That means persisted job state, explicit partial-failure states, safe re-runs, representative test fixtures, structured observability, and clear cancellation or recovery semantics. It also means distinguishing operational telemetry from sensitive content. The platform should measure what happened without logging full source documents or full prompts by default.

| Production Concern | Engineering Response |
|---|---|
| Long-running workflows | Persist stage, status, timestamps, warnings, and recoverable checkpoints |
| Partial failure | Represent degraded, waiting, cancelled, stale, and failed states explicitly |
| Quality regression | Use deterministic tests, representative file fixtures, golden outputs, and LLM evaluations |
| Cost and latency | Track token estimates, duration, retry count, and per-stage concurrency |
| Sensitive data | Keep provider access server-side and avoid raw prompt or document logging by default |
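
Explicit partial-failure states are easiest to reason about as a small state machine. The sketch below takes the degraded, waiting, cancelled, stale, and failed states named in the table and adds `RUNNING` and `COMPLETED`. The transition rules themselves are assumptions chosen for illustration, not the platform's actual policy.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class JobStatus(Enum):
    RUNNING = "running"
    WAITING = "waiting"      # paused for expert input
    DEGRADED = "degraded"    # continuing with reduced quality or missing inputs
    STALE = "stale"          # inputs or assumptions changed since the last run
    CANCELLED = "cancelled"
    FAILED = "failed"
    COMPLETED = "completed"


# Assumed transition rules; a real workflow would define its own.
ALLOWED_TRANSITIONS = {
    JobStatus.RUNNING: {JobStatus.WAITING, JobStatus.DEGRADED, JobStatus.CANCELLED,
                        JobStatus.FAILED, JobStatus.COMPLETED},
    JobStatus.WAITING: {JobStatus.RUNNING, JobStatus.STALE, JobStatus.CANCELLED},
    JobStatus.DEGRADED: {JobStatus.RUNNING, JobStatus.FAILED, JobStatus.CANCELLED,
                         JobStatus.COMPLETED},
    JobStatus.STALE: {JobStatus.RUNNING, JobStatus.CANCELLED},
    JobStatus.FAILED: {JobStatus.RUNNING},  # safe re-run
    JobStatus.CANCELLED: set(),
    JobStatus.COMPLETED: {JobStatus.STALE},
}


@dataclass
class Job:
    job_id: str
    stage: str
    status: JobStatus = JobStatus.RUNNING
    warnings: list = field(default_factory=list)
    history: list = field(default_factory=list)

    def transition(self, new_status: JobStatus, warning: str = "") -> None:
        if new_status not in ALLOWED_TRANSITIONS[self.status]:
            raise ValueError(f"illegal transition: {self.status.value} -> {new_status.value}")
        # Record the old state, the new state, and a timestamp for later inspection.
        self.history.append((self.status, new_status, datetime.now(timezone.utc)))
        self.status = new_status
        if warning:
            self.warnings.append(warning)


job = Job("job-1", "extraction")
job.transition(JobStatus.WAITING, warning="needs expert clarification")
job.transition(JobStatus.RUNNING)
job.transition(JobStatus.COMPLETED)
```

Rejecting illegal transitions in code means a job cannot silently jump from cancelled back to running, and the history gives operators a timeline without logging any document content.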

Benefits Of This Approach

- Trust: Outputs are structured, inspectable, and connected to evidence or assumptions.

- Traceability: Recommendations can be followed back to source data, intermediate artifacts, and expert decisions.

- Scalability: Once the workflow pattern is established, new diagnostic domains can reuse the same architectural foundation.

- Resilience: Persistent artifacts allow workflows to recover from interruptions, validation failures, and user delays.

- Better human-AI collaboration: The AI handles high-volume interpretation, while experts focus on judgment, exceptions, and final validation.

- Decision quality: The system can prefer ranges over false precision, surface uncertainty, and connect recommendations to operational drivers.

Tradeoffs

This architecture is more complex than a simple chat interface. It requires workflow orchestration, schema design, validation layers, artifact versioning, file handling, and careful state management.

It also requires stronger product discipline. Every workflow needs a clear definition of the final artifact, which fields are editable, which steps require validation, and where human judgment belongs.

There is a tradeoff around speed as well. A governed workflow may take longer than a single model response. But for enterprise advisory use cases, the additional structure is usually worth it. The objective is not instant prose. The objective is a reliable decision artifact.

This approach also intentionally constrains model flexibility. Outputs are shaped by schemas, domain workflows, validation rules, and expert review. In high-stakes operational analysis, that constraint is a strength. Repeatability, lineage, and controlled review are more valuable than unconstrained generation.

There is also an operational tradeoff. Higher throughput requires concurrency controls, provider policy, token budgeting, and careful handling of long-running jobs. Those controls add engineering overhead, but they are what allow the platform to scale beyond isolated expert usage.
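
A per-stage concurrency control can be as small as a semaphore around each model call. The following is a minimal sketch using `asyncio.Semaphore`. The limit of two, the `fake_model_call` stand-in, and the peak-tracking counter are assumptions added so the behavior is observable.

```python
import asyncio


async def run_stage(tasks, limit: int):
    """Run zero-argument coroutine factories with at most `limit` in flight at once."""
    semaphore = asyncio.Semaphore(limit)
    in_flight = 0
    peak = 0

    async def guarded(task):
        nonlocal in_flight, peak
        async with semaphore:
            in_flight += 1
            peak = max(peak, in_flight)
            try:
                return await task()
            finally:
                in_flight -= 1

    # gather preserves input order in its results.
    results = await asyncio.gather(*(guarded(t) for t in tasks))
    return results, peak


async def fake_model_call(i: int) -> int:
    """Stand-in for a provider call; sleeps briefly instead of using the network."""
    await asyncio.sleep(0.01)
    return i * 2


async def demo():
    tasks = [lambda i=i: fake_model_call(i) for i in range(5)]
    return await run_stage(tasks, limit=2)


results, peak = asyncio.run(demo())
```

In practice the limit would come from provider policy and token budgets rather than a constant, and each stage could hold its own semaphore.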

The Engineering View

From an engineering perspective, the most important design choice is not which model powers the system. Models will continue to evolve. The durable advantage is the architecture around the model.

A strong AI advisory platform needs typed boundaries between model output and application state. It needs human-in-the-loop review at points of uncertainty. It needs persistent artifacts that survive workflow interruptions. It needs clear lineage from raw input to recommendation. It needs domain-specific validation rather than generic summarization. And it needs recovery paths that do not depend on perfect model memory.

That is the difference between an impressive AI demo and an enterprise-grade AI system.

This platform is built for the latter: a system that helps experts move faster while preserving the rigor required for operational and financial decisions.

Technologies

- Python

- FastAPI

- SQLAlchemy

- Alembic

- Pydantic

- PostgreSQL

- OpenAI API

- Anthropic Claude API

- Claude Agent SDK

- LangGraph

- LangChain

- Next.js

- Tailwind CSS

- Okta

- AWS

- Docker

- Kubernetes

- Helm